- Charles Sturt PhD student co-authors article on LGBTIQA+ exclusion in the development and use of robotics and artificial intelligence (AI)

- Mr Adam Poulsen co-wrote article to draw attention to potential discrimination and biases against LGBTIQA+ community in robotics and AI

- Port Macquarie student is working on PhD project researching how to design robots to better meet the needs and values of LGBTIQA+ elders

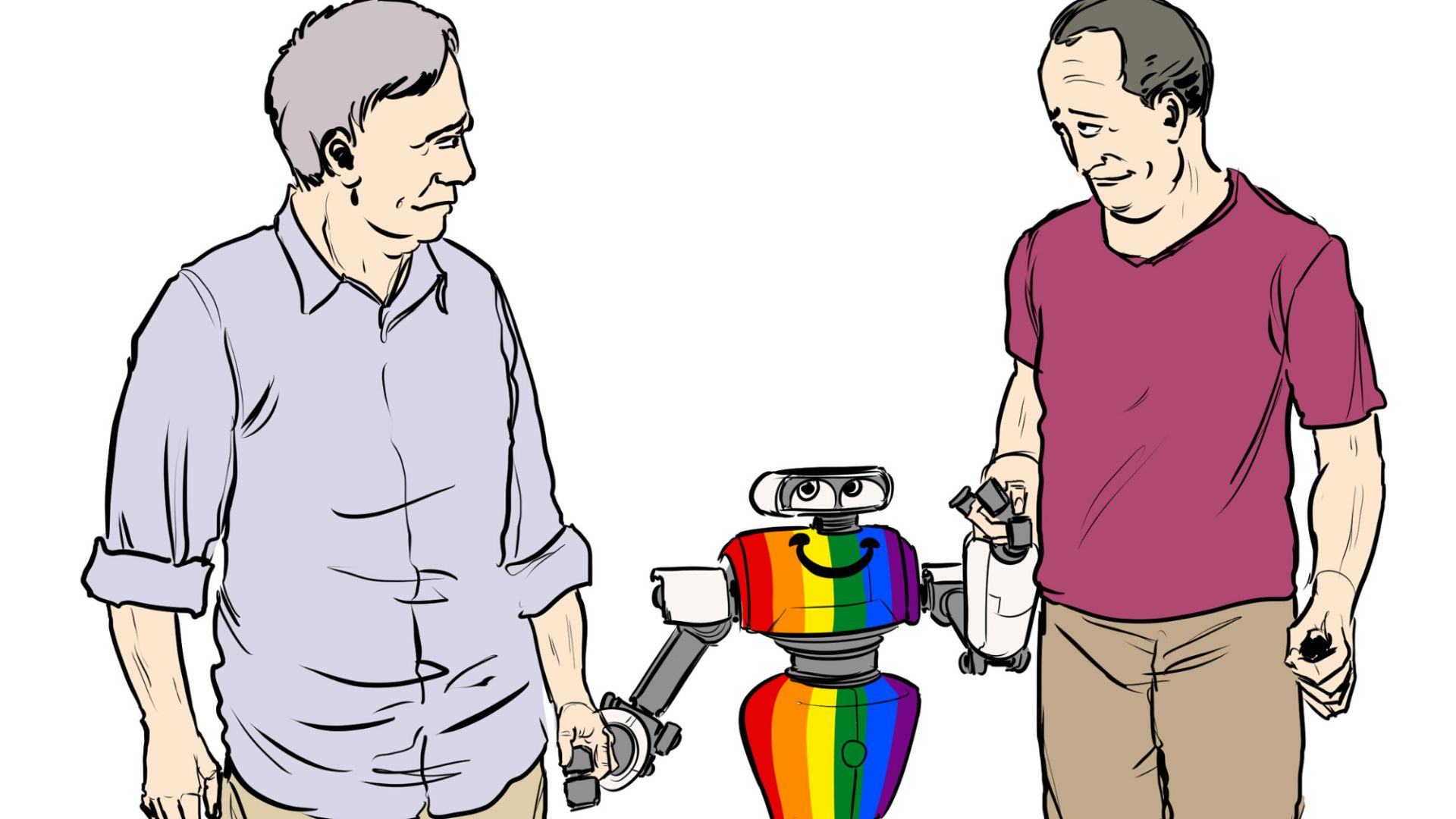

A Charles Sturt University PhD student from Port Macquarie has contributed to a new article that shines a light on issues of LGBTIQA+ inclusion and potential biases in the development and use of robotics and artificial intelligence (AI).

The article, ‘Queering Machines’, was published last week in the journal Nature Machine Intelligence and co-authored by Charles Sturt PhD candidate in the School of Computing & Mathematics Mr Adam Poulsen, Dr Eduard Fosch-Villaronga from Leiden University, and Dr Roger Andre Søraa from the Norwegian University of Science and Technology.

Mr Poulsen (pictured) said discrimination and bias are known to be implicit problems in many AI applications, and the article was written to explore how these problems affect the LGBTIQA+ community.

“Understanding how machines affect the LGBTIQA+ community appears largely underexplored in scientific literature, but there is research that shows the perspectives of members in the LGBTIQA+ community were not considered in the design of specific AI applications,” Mr Poulsen said.

“We wrote the piece to draw attention to these issues and talk about the importance of including the LGBTIQA+ community in the development of robots and AI to avoid potential discrimination and exacerbation of existing biases.

“If LGBTIQA+ persons continue to be excluded, robot and AI developers won’t understand how these technologies may affect the LGBTIQA+ community and it can even hinder their free speech.

“Our article actually references a 2019 study which found this was the case.”

According to Mr Poulsen, the 2019 study found that several drag queens’ Twitter accounts were being flagged by an AI tool as having high toxicity levels because the tool did not understand the context of the content it was measuring.

In their article, Mr Poulsen and his co-authors call for more research into how robot and AI development impacts the LGBTIQA+ community, and for more strategies and policies that acknowledge the importance of LGBTIQA+ inclusivity, diversity, and non-discrimination.

“It is important to push for initiatives that address diversity in robotics and AI development and use, otherwise LGBTIQA+ groups will remain mostly invisible and silenced,” Mr Poulsen said.

“Robotics and AI have the ability to both disempower and empower the LGBTIQA+ community. We need to ensure it empowers.”

Mr Poulsen’s current PhD study aims to help empower older LGBTIQA+ persons by researching how care robots can be programmed to facilitate social interaction and alleviate the loneliness experienced by some in the ageing LGBTIQA+ community.

“My study is about learning first-hand how we can design robots to better meet the needs and values of LGBTIQA+ elders,” Mr Poulsen said.

“For example, it might be valuable to reprogram an LGBTIQA+ elder’s care robot so it doesn’t ask if your gender is male or female, because not everyone identifies as one or the other.

“Design considerations like this for robots and AI foster inclusion and provide an innovative advance toward equity for the broader LGBTIQA+ community, particularly for older adults at risk of social isolation and subsequent experiences of loneliness.”
